802 Program Evaluation

Program Evaluation Design for Junior M.Y. Loft - Town of Morinville Youth Program

The town of Morinville is located in northern Alberta, Canada. It has a population of 9,893 residents and is located in a rural area 15 minutes from the city of St. Albert. The town has experienced an increase in population over the last 10 years. It currently has three new residential areas under development and will be adding an elementary school and a middle school within the next three years. Many families have moved to Morinville because of its affordable homes and taxes and its proximity to major local employers: the oilfield, the military and the city.

Recently the town has been working to improve its amenities. A new community cultural centre was constructed in 2011, and the town offices and library were rebuilt in 2012. New pathways and botanical elements have been added to residential neighbourhoods. The town is working towards building a community recreation centre with a new hockey arena and other sporting facilities. The demand for increased access to these amenities is coming from residents of the town and surrounding areas and is being supported by other local municipalities.

To learn more about the town of Morinville visit: http://www.morinville.ca/community/about-morinville

Program Description:

The Junior Morinville Youth Loft (Jr. M.Y. Loft) is a free after-school club that gives students in grades 3-5 a chance to experience the opportunities offered by the older youth program; it is run under the Morinville Youth Program. It runs Mondays from 3:30-5:30pm at the Morinville Community Cultural Centre (MCCC). It offers organized activities such as cooking, gym games, crafts and minute-to-win-it challenges, as well as free time in the loft space with video games, foosball and traditional board games. The program maintains a paid staff-to-student ratio of 1:12 as per the provincial standard, although in practice there are typically fewer students per staff member.

Program History and Focus:

This program was created after a student who was too young to attend our Sr. Youth program brought forward the idea. It began as a sampler program to see whether the intended demographic needed it. It was designed to parallel the Sr. Youth program but adjusted to meet the needs and levels of a younger age group. The four pillars of our program structure are Leadership, Recreation, Healthy Well-being, and Volunteerism. Through these, we incorporate opportunities for enhancing self-awareness, youth development and personal growth. (Erin Debusschere, Morinville Youth Program Coordinator)

The goals of the Jr. M.Y. Loft program are to provide a safe, supervised, and welcoming space at no cost to participants, so that cost is not a deterrent to participation. We aim to give junior youth the same access to Critical Hours* programming as the older demographic. We also aim to offer a window into what youth programming for the older demographic looks like, to encourage participation in that program once they have aged out of the Jr. Youth Program. Dedicated staff and volunteers encourage inclusion, healthy competition, and socializing among youth of all ages. Our programs also include healthy eating and physical literacy components in partnership with ParticipACTION.

*Critical Hours programming targets youth during the time frame from after school to before supper. When youth are unsupervised during this period, this demographic has a higher chance of being exposed to potential at-risk activities. By offering our program during that time frame, we aim to help decrease the risk to youth in our community. (Information provided by Erin Debusschere, Morinville Youth Program Coordinator)

The program requires the loft, hall, kitchen and meeting spaces of the MCCC. It also requires a budget for staffing and for activity supplies.
The program also uses the Ray MacDonald centre during the summer months. It is advertised through the local recreation guide, the town website, posters/flyers and social media. Parents are required to register their children for the program through the town's community recreation programs. The program has two full-time youth workers: one designated lead for program planning and one designated lead for the Morinville Youth Leadership Program. Both facilitate our youth programs, along with two part-time youth workers. Our operational budget comes from the Family and Community Social Services (FCSS) funding allocated to the Town of Morinville by the provincial government.

The primary stakeholders currently associated with the Jr. M.Y. Loft program are youth in grades 3-6 and their parents, the youth program staff, Morinville Town Council, and the Morinville Youth Council. Other potential stakeholders who may take an interest in the program but are not currently directly connected include the local elementary and middle schools, parents of children of any age, local businesses and other user groups of the MCCC where the program takes place. The primary stakeholders will be the focus of our evaluation. Since Morinville Town Council is the stakeholder requesting the evaluation, it will also contribute to the program purpose and description and will help develop the main evaluation questions in cooperation with the youth program coordinator, Erin Debusschere.

To learn more about the day-to-day activities of M.Y. Loft, visit their Facebook page at: https://www.facebook.com/MorinvilleYouth/

Program Evaluation Purpose:

The purpose of the Jr. M.Y. Loft program evaluation is twofold. First, Morinville Town Council and FCSS provide funding to the Jr. M.Y. Loft program and require information to ensure that the program is meeting its goals. Second, council members would like to ensure that the program is addressing the needs of youth within the community. The focus questions were created collaboratively by the program evaluator and the key stakeholders: the Morinville Youth Council Director and Morinville Town Council.

The focus questions for the program evaluation are:

Comprehensiveness of Policy Description:

Quality of Delivery and Participant Responsiveness:

- Activities provided at the program: Are the activities offered at Jr. M.Y. Loft the ones that junior youth are looking for?
- Staff at the program: Do youth feel connected with staff? Do parents feel staff are providing the necessary supervision and leadership?

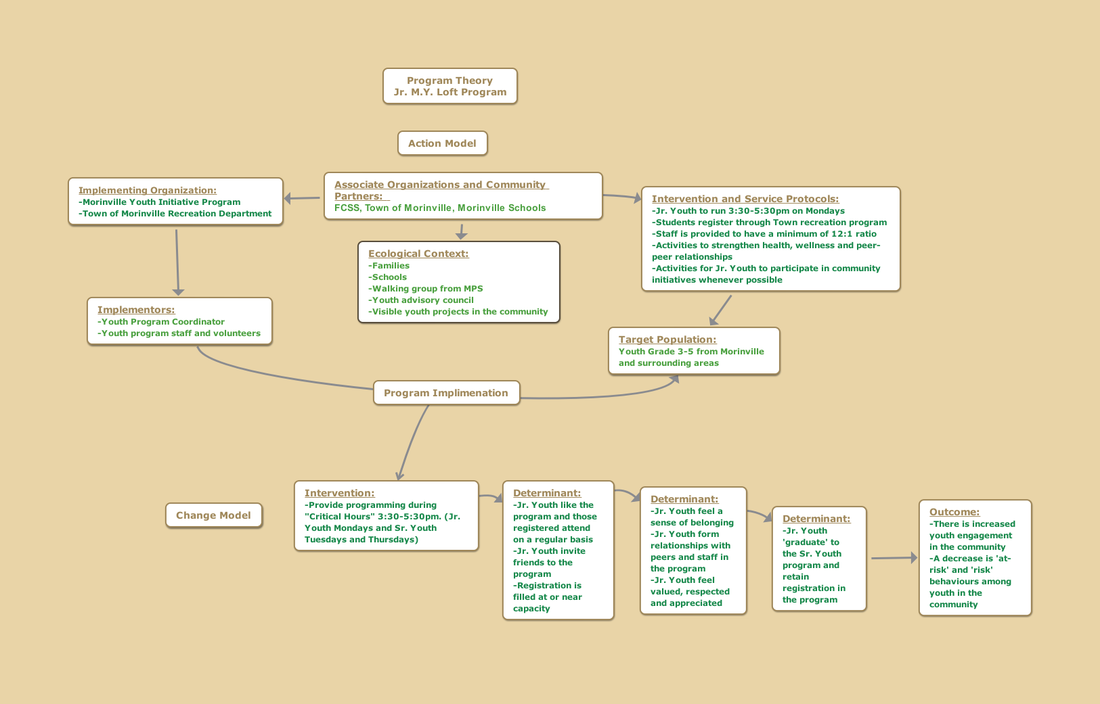

Where to collect the information needed to answer these questions, and definitions of key terms such as 'at-risk' and 'satisfied', will be agreed upon through stakeholder meetings with the Morinville Youth Council, Morinville Town Council, RCMP representatives, FCSS and Social Services representatives, and other stakeholders who may wish to participate, such as school board trustees or other youth-based agencies in the community. For more information on how data will be collected to answer these focus questions, please refer to the "Data collection methods and analysis strategies" section below and to the planning document.

Program Theory:

The Jr. M.Y. Loft program theory is depicted in the Action Model and Change Model below. The Action Model outlines the "who, what, where, when, and how" of the program. The "results", or intended goals, of the program are represented in the Change Model.

Action Model and Change Model:

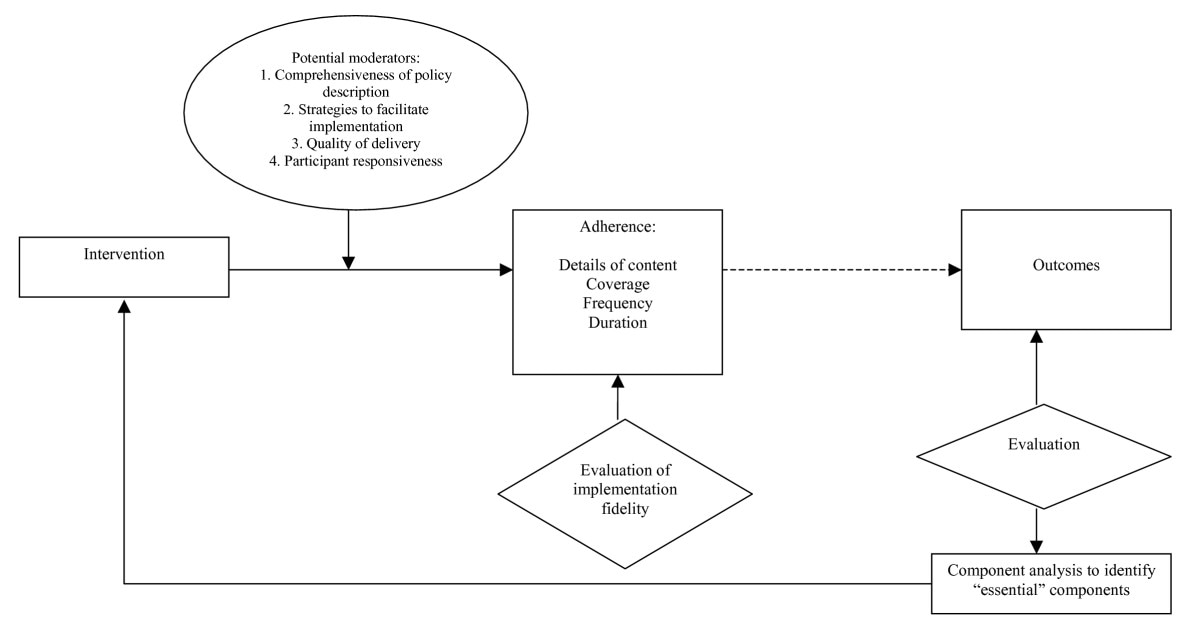

Chen, H. (2005). A conceptual framework of program theory for practitioners. In Practical program evaluation (pp. 15-43). SAGE Publications Ltd. doi:10.4135/9781412985444.n2

Evaluation Method and Approach:

drive.google.com/file/d/0B7_hDUJ7NrxyVG85Rl8yeGRKTFE/view?usp=sharing

I will be using a form of assessment-oriented process evaluation for the Jr. M.Y. Loft program. As Huey-Tsyh Chen explains, an assessment-oriented process evaluation is called for when external stakeholders (funding agencies, decision makers) and/or internal stakeholders want to know how well a program is being implemented (Chen, 2005, p. 159). There are two kinds of assessment-oriented process evaluation: fidelity evaluation and theory-driven process evaluation.

"The tenet of theory-driven evaluation is that the design and application of evaluation needs to be guided by a conceptual framework called program theory (Chen 1990, 2005). Program theory is defined as a set of explicit or implicit assumptions by stakeholders about what action is required to solve a social, educational or health problem and why the problem will respond to this action. The purpose of theory-driven evaluation is not only to assess whether an intervention works or does not work, but also how and why it does so. The information is essential for stakeholders to improve their existing or future programs." (R. Strobl et al. (Eds.), Evaluation von Programmen und Projekten für eine demokratische Kultur, © Springer Fachmedien Wiesbaden 2012, DOI 10.1007/978-3-531-19009-9_2)

Theory-driven process evaluation is therefore the best-suited method for the Jr. M.Y. Loft program, since the Morinville Town Council's evaluation request relates to continued funding and to understanding how well the program was implemented and how well it is working for youth and their parents. The evaluation will use the focus questions listed above to drive the process, and will use the conceptual framework of program theory to help determine how well the implementation of the Jr. M.Y. Loft program is going. Furthermore, program evaluators, in collaboration with stakeholders, will research the following questions:

- What is considered 'at-risk' behaviour in the context of the Morinville community and by the program stakeholders?
- Is there evidence or data available to show that the program has had an impact on 'at-risk' behaviours?
- Is there evidence to determine how many of the youth in the program would be considered 'at-risk'?
- Is there evidence or data on how many 'at-risk' youth are currently in the Morinville area?
- Is there evidence or data to support an increase in self-awareness, youth development and personal growth due to involvement in the program?

Data collection methods and analysis strategies:

Program evaluators will work collaboratively to gather data and evidence from the following stakeholders:

- Jr. Youth Loft participants
- Sr. Youth Loft participants
- M.Y. Loft program staff
- Parents of participants
- Youth council
- School-based youth liaison staff (RCMP officers, teachers, social workers if applicable)

The evaluation process will also seek to answer the evaluation focus questions through focus group meetings, interviews and on-site visits. Surveys (online or paper format) may also be used, especially for those who may not be available to attend focus group meetings or interviews.

To address the questions related to Comprehensiveness of Policy, the program evaluators and stakeholders will hold focus meetings and host collaborative questioning meetings with the Morinville Youth director, staff and the Morinville Youth Council.

To address the questions of Program Implementation Strategies and Program Implementation, program evaluators will seek program information from the Town of Morinville Recreation Department to collect data on program registrations and enrolment. Program staff, participants and parents of participants may also be surveyed to collect any data not available through the registration records. The local schools may also be consulted for their perspectives.

To address Quality of Delivery and Participant Responsiveness, the evaluation process will host several focus group meetings, interviews and on-site visits that engage all stakeholders in discussions around the focus questions related specifically to the quality of delivery of the program. Every effort will be made to ensure that stakeholders have several opportunities to participate. Online and paper surveys will be provided to anyone who may not be able to attend focus groups or interviews, or who may not wish to share in a public setting.
Once data collection has been completed, the program evaluator, in collaboration with the Morinville Youth Coordinator, Morinville Town Councillors, Morinville Youth program staff, Morinville Youth Council members and parent representatives, will review the data in relation to the evaluation focus questions. Every effort will be made to understand the data in the context of the community and to apply it to the focus questions. These questions, and the data used to answer them, will build an understanding of how the Jr. M.Y. Loft program has been implemented in the community over the 2016-2017 program season. This will help the program stakeholders understand what worked well, what was lacking, what they can learn from the initial implementation, and what they can do moving forward. If the team feels the data collected is not providing the information needed for the program evaluation, the collaborative evaluation team may also revisit the focus questions at the data analysis stage.

Approach to enhance evaluation use:

Ensuring that the program evaluation positively impacts the Jr. M.Y. Loft program is paramount. To enhance evaluation use, the program evaluator will build collaboration and act as a facilitator for stakeholders from the very beginning of the evaluation. The stakeholders are the ones who have the unique perspectives necessary to best understand the implementation of the program thus far and how it might be improved.

To start the evaluation process, the program evaluator will work with the stakeholders to articulate a description of their program, its goals and the reason for their evaluation. As the program evaluator begins the evaluation design, they will consult with the stakeholders on the process as it develops. Once the program evaluation is underway, the stakeholders will be involved in the data collection and analysis as described above. Having the data collection and analysis done collaboratively with the various stakeholders will ensure that everyone involved has an equal opportunity to speak to the strengths and weaknesses of the program implementation. By honouring their voices as 'data' and analyzing them in relation to one another, we can validate each other's perspectives while understanding how the implementation has impacted each stakeholder. Finally, the program evaluator will work as a facilitator so that the stakeholders can analyze the data to build an understanding and then transform this understanding into recommendations, in the form of a report, for the Morinville Youth Program. The program evaluator will then further guide the Morinville Youth Program and the Morinville Youth Council to understand what they learned about the initial stage of the Jr. M.Y. Loft program and to apply the stakeholders' recommendations to improve the implementation of the program for the 2017-2018 program season.

Commitment to Standards of Practice:

Created in 1975, the Joint Committee on Standards for Educational Evaluation is a coalition of major professional associations in the United States and Canada concerned with the quality of evaluation. The Joint Committee has published three sets of standards for evaluations that are now widely recognized: The Personnel Evaluation Standards (2nd Ed.), The Program Evaluation Standards (3rd Ed.) and The Classroom Assessment Standards for PreK-12 Teachers.

The Junior M.Y. Loft program evaluation will meet The Program Evaluation Standards in the following ways:

Utility Standards

The utility standards are intended to increase the extent to which program stakeholders find evaluation processes and products valuable in meeting their needs.

Feasibility Standards

The feasibility standards are intended to increase evaluation effectiveness and efficiency.

Propriety Standards

The propriety standards support what is proper, fair, legal, right and just in evaluations.

Accuracy Standards

The accuracy standards are intended to increase the dependability and truthfulness of evaluation representations, propositions, and findings, especially those that support interpretations and judgments about quality.

Evaluation Accountability Standards

The evaluation accountability standards encourage adequate documentation of evaluations and a metaevaluative perspective focused on improvement and accountability for evaluation processes and products.

The American Evaluation Association recommends that all program evaluators and evaluations follow the Guiding Principles for Evaluators created by the AEA in 1995. These principles are: Systematic Inquiry, Competence, Integrity/Honesty, Respect for People, and Responsibility for General and Public Welfare. The above program evaluation of the Jr. M.Y. Loft program aims to meet these guidelines in the following ways:

Program Evaluation: Highlights of Learning

Below is a collection of work done for the purpose of learning and discussion throughout the PME 802 course.

Discussion: Program Evaluation Responses

Program evaluation is the systematic application of scientific methods to assess the design, implementation, improvement or outcomes of a program (Rossi & Freeman, 1993; Short, Hennessy, & Campbell, 1996). Program evaluation can be broken down into three types: process, impact and outcome evaluation. Process and impact evaluations are ways for programs to ensure that they are on target to reach their goals and objectives. The Rural Health Information Hub of the US Department of Health & Human Services defines these evaluation types as follows: "Process evaluation can help identify strategies to improve the quality and delivery of a program. It can, in some cases, provide a context for identifying more immediate signs of program effectiveness. Impact evaluations measure the immediate and short-term changes made possible by program activities. This type of evaluation assesses the degree to which program objectives and goals were met" (Rural Health Information Hub, 2017).

Our school has a Reggio Emilia program that recently underwent a program evaluation. We used process evaluation to examine how teachers throughout the school were adopting and exhibiting this program in their teaching. We asked teachers to explain their understanding of the program and provide examples of how they were implementing it. The evaluation found that some teachers understood the core values, but new staff felt undertrained to bring the program into their teaching. In response, we developed professional development (PD) for new staff so that they would be better equipped to deliver the program. We used impact evaluation to determine how the program was affecting student and parent satisfaction with the school, which is one of the program's main goals. We developed questionnaires for students and parents.
When we analyzed these questionnaires, we were able to determine which areas of Reggio were providing the most satisfaction to students and parents and which areas were having little to no impact. We determined this was because some aspects of the program were being communicated more than others. We then worked with teachers to improve their communication with parents to ensure that all areas of the program received equal attention.

Reference: https://www.ruralhealthinfo.org/community-health/health-promotion/4/types-of-evaluation

Connecting with the Program Evaluation Community via the AEA365 Blog

Theory Driven Evaluation

I read "Internal Eval Week: A tool for helping staff translate results into action" by Jay Szkola (http://aea365.org/blog/), posted February 5, 2017. The focus of this article was how to help staff turn program evaluation results into action for program improvement. A quick browse through the blog showed that this was a popular topic, with many posts on how to make results, especially in the form of data, accessible and meaningful for programs. I enjoyed this particular post more than the others on the topic because it seemed the most direct and applicable. I enjoyed how the author shared his experience with community-based youth justice programs and his 'cool trick' of creating an Excel sheet (Szkola, 2017). This simple Excel sheet helped staff easily input data results and get immediate feedback that they could use to adjust the program right away. This seemed to address the concern of a program's needs changing faster than the evaluation process can accommodate (Flores & Paul, 2017). It is very important for programs to be able to use program evaluation data in a way that is accessible and understandable to them so they can immediately begin to improve their program. This blog post also supports the ideas presented in Chen's book.
Generally speaking, the earlier that program evaluation techniques are incorporated in the planning of a program, the easier it becomes for the directors and implementers to improve the new program using evaluation feedback (Chen, 2005, p. 71).

Reviewing Jack Mills

I found the AEA365 blog to be very interesting and took some time to explore it before diving into the articles. I liked how simple the blog was! It also had a wide variety of authors from different professional communities and contexts, which gave the blog a very collaborative, community feel. Jack Mills's blog post was very helpful in increasing my understanding of evaluation theory. His citation and simplification of the Donaldson and Lipsey (2006) article into three essentials was very helpful. He defined program theory and social science theory clearly, and I appreciated the example Mr. Mills used to explain program theory and the social science view. Describing how a program, such as the undergraduate training program in his example, might use program theory versus social science theory really helped me understand the different impacts that the two theories can have on programs. With regard to program evaluation, using his example, I can see how evaluators could use knowledge of both theories to help program stakeholders see potential areas of improvement or success. I wish that Mr. Mills's post had also included the first essential type of theory, the theory of what makes a good evaluation, in his example. He did not address this point in his post, and I had hoped that he would. I am aware that blog posts are generally very short, so I understand the author's need to be brief.

Connecting with the AEA365 blog on a personal level:

I came across a great article on the AEA365 blog and shared my thoughts on the article with the author.
Here is a link to the article, with my comments published below it: http://aea365.org/blog/have-a-party-to-share-evaluation-results-by-kendra-lewis/comment-page-1/#comment-231240

Dilemmas in Evaluation Use

Dilemma in Evaluation Use: How to keep program evaluations from becoming a checked box instead of an agent for program improvement - Monique Webb

A major dilemma in evaluation use is how to prevent evaluations from simply being completed and never applied. Our video, Utilization-Focused Evaluation for Equity-Focused and Gender-Responsive Evaluations (Patton, 2013), highlighted this dilemma through the examples of the Rwandan Genocide and the I-35W Mississippi River Bridge collapse of August 1, 2007. Patton explains that in both situations regular evaluations were done. In the case of Rwanda, these evaluations were ignored by those presented with the evaluation reports. In the case of the I-35W bridge, the evaluation data was never put to use; the evaluations were treated as a 'compliance activity' rather than a source of potential improvement. So how do we address this dilemma, and how can evaluators design their evaluations, and evaluation practices, to create more evaluation use? The answer to the first question is found in the study of utilization. In their article, Evaluation Use: Theory, Research, and Practice Since 1986, Shulha and Cousins (1997) explain that as researchers explored evaluation use, they discovered that designing useful evaluations required much more than simply matching clients' questions with particular methods, approaches or models. More and more, evaluators were taking great pains to identify the type of use sought by program stakeholders and to custom-design evaluations that would best promote such uses.
This new idea grew, and evaluation began to be seen as a continuous information dialogue between evaluators and program stakeholders, who both share responsibility for generating, transmitting and consuming evaluation information (Shulha & Cousins, 1997). It was observed that participation in evaluation gives stakeholders confidence in their ability to use research procedures, confidence in the quality of the information that is generated by these procedures, and a sense of ownership in the evaluation results and their application (as cited in Shulha & Cousins, 1997). In other words, if stakeholders are deeply involved in the process, it is more likely that an evaluation and its data will be effectively applied and used by a program. So how can evaluators design evaluations that promote evaluation use and address this dilemma? Michael Q. Patton (2013) suggests several guidelines evaluators should follow to promote process use. Evaluators should always identify the primary intended users who will use the information and involve these primary users in the design and process of the evaluation. Evaluators should ensure that evaluation is part of the initial program design and that primary users want the information to help answer their questions. Evaluators should help primary users clarify their purpose and objectives. Most importantly, evaluators should make implications for use part of every decision throughout the evaluation (Patton, 2013). Patton's ideas are being applied throughout the program evaluation community. Most recently, the American Evaluation Association's blog, AEA365, posted an article by Christy Metzler that shows how she has incorporated these ideas into her practice. To help advance the work of the evaluation community in this area, she shares three 'Hot Tips' with readers: connect to business planning, make it inclusive, and embed program staff (Metzler, 2017).
However, Patton's approach also comes with cautions. In working so directly and collaboratively with program stakeholders, evaluators could face ethical issues. For example, Shulha and Cousins (1997) present the case of McTaggart (1991), in which McTaggart altered an evaluation report when it was discovered that the information could result in severe consequences for the program stakeholder who provided it. Patton (2013) cautions that, much like the court jesters of medieval times, evaluators must work with stakeholders to prepare them to be receptive to the information they may receive. Program evaluators should also use standards, such as The Program Evaluation Standards or the Guiding Principles for Evaluators, to guide their practice and guard against misuse (Shulha & Cousins, 1997).

References:

Metzler, C. (2017, February 20). Cultivating a program staff's inner action hero: Participatory strategies that promote evaluation use [Blog post]. Retrieved from http://aea365.org/blog/?s=evaluation+use&sub

Patton, M. Q. [MyMandE]. (2013, June 7). Utilization-focused evaluation for equity-focused and gender-responsive evaluations [Video file]. Retrieved from https://www.youtube.com/watch?v=jQP1FGhxloY

Shulha, L. M., & Cousins, J. B. (1997). Evaluation use: Theory, research, and practice since 1986. Evaluation Practice, 18(3), 195-208.

Practicing Logic Models:

U-Well: A University Wellness Program for Staff

Program Background: This educational program was designed to support increased wellness of staff and instructors at a local university. U-Well was initially implemented in response to increased sickness on campus and challenges with staff/instructor work-life balance. Staff sick days had increased by 15% in the past three years, and instructors persistently reported challenges with work-life balance on the annual university staff survey over the past five years. The U-Well program was endorsed by the University's central administration and facilitated by the on-campus Health Services Unit (HSU). Four staff members in the HSU were responsible for administering the program: the HSU director, one health professional, and two educators. The program has been operational for two years.

Program Goals: The goals of the U-Well program are as follows:

The program operates every Monday from 4:00-5:00pm and Thursday from 12:00-1:00pm. Staff and instructors who have individually elected to participate in the U-Well program attend the sessions. Staff and instructor participants are from across the university and have varying backgrounds in health and fitness. Approximately 35-45 staff members attend each session. During the sessions, the U-Well facilitators engage participants in learning activities that help meet the program goals. These activities include:

The U-Well program has been in operation for two years, and the University administration wants to know whether or not it is having an impact on staff and instructor wellbeing. They have hired you to conduct a program evaluation to determine the effectiveness of the U-Well program in meeting its intended goals. To begin your evaluation planning, you start by constructing a program logic model for U-Well. In the document below, you will find a logic model template that you can use. Some of the logic model is already filled in so that you can get started easily and quickly. Your task for this assignment is to complete the empty cells of the table based on the case study information provided. When you are finished, post your logic model in the Discussion Board: Case Study Responses.

"M.Y. Loft is a place where anyone can relax and fully express, without fear of being made to feel uncomfortable, unwelcome, or unsafe on account of their race/ethnicity, sexual orientation, gender identity or expression, cultural background, religious affiliation, age, or physical or mental ability."

-Program Coordinator Erin Debusschere

The Jr. M.Y. Loft youth participated in the Canada 150th Mosaic in February 2017. Each youth got to create their own individual tile painting that then became part of Canada's 150 Mosaic painting.

"...participation in evaluation gives stakeholders confidence in their ability to use research procedures, confidence in the quality of the information that is generated by these procedures, and a sense of ownership in the evaluation results and their applications." (Ayers, 1987; Cousins, 1995; Greene, 1988)